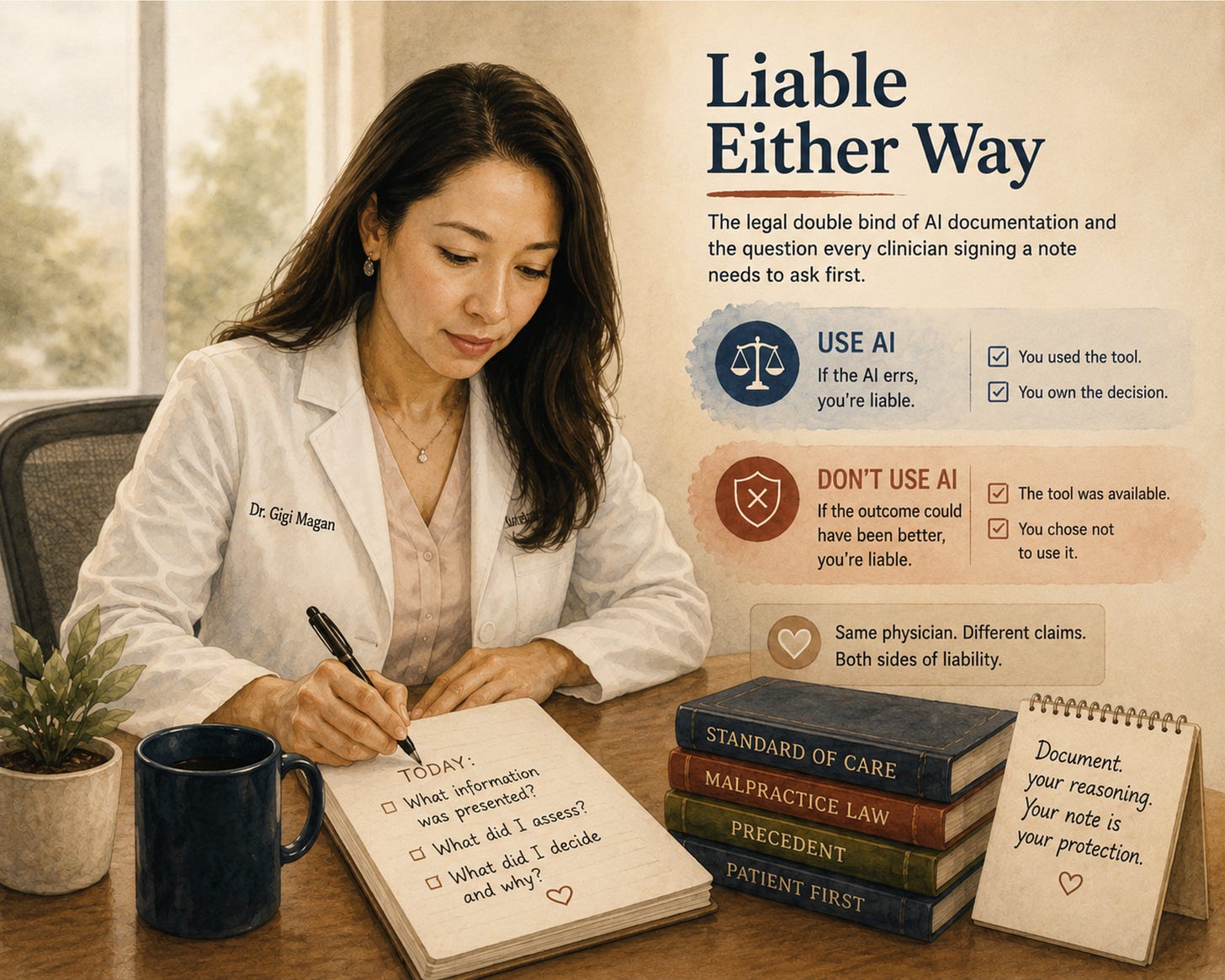

Liable Either Way

The legal double bind of AI documentation and the question every clinician signing a note needs to ask first.

The risk calculus changed for me at a dinner table. Not a courtroom.

Six professionals. A meal. The conversation turns to AI in medicine. A malpractice attorney, seated two chairs over, sets down her fork and says it plainly: physicians will be liable for using AI and for not using it.

The table goes quiet.

Both directions. Same physician. Different claims.

She was not speculating. She was describing how malpractice law evolves when a new standard of care emerges. The current evidence base is messier than the headlines suggest. Some studies show large language models outperforming physicians on diagnostic reasoning when working alone. Others show no significant improvement when an LLM is added to a physician’s workflow. The collaborative version, physician plus AI, performs best in specific conditions and worse in others.

Mixed evidence is exactly the soil malpractice law grows in.

What Actually Changed

In a 2024 JAMA Network Open randomized trial led by Goh and colleagues, ChatGPT-4 alone outperformed physicians on clinical reasoning vignettes. The same study found that adding the LLM to physicians’ workflows did not significantly improve their diagnostic accuracy. A 2025 systematic review of 83 studies found no overall performance gap between AI and physicians, with substantial variability by condition and specialty. Late-2025 meta-analyses suggest LLM assistance can improve physician diagnostic accuracy in some settings, though what makes that assistance succeed remains unclear.

The picture is not “AI plus physician is always better.” The picture is: the tools are sometimes better than us, sometimes equal, sometimes worse, and we are responsible for knowing which.

Malpractice law does not wait for the field to converge. It follows what a reasonable physician would do given the available tools. If AI is sometimes outperforming physicians and sometimes not, refusing to use it at all gives a plaintiff a plausible basis for a claim. So does using it carelessly.

This is not a technology problem. This is a liability architecture problem. And it is forming in real time, without formal guidance, without training, and without most clinicians being aware of it.

Where Risk Shows Up First

A close friend of mine brought AI into her own care last year. Her blood counts had been mildly abnormal for nearly a decade. She had been seen once a year, monitored conservatively, told her labs were stable, and sent on her way. Symptoms accumulated. Cramping. Palpitations. The kind of small daily questions that erode trust without ever rising to the level of a full workup.

She got tired of waiting. She uploaded her entire medical record into a large language model, prompted it to look at the trajectory of her labs against her symptoms, and asked what a specialist might consider.

The model returned a working differential, named a specific hematologic diagnosis, and recommended a workup that included a bone marrow biopsy. She sent the output to her physician. Her physician spent the better part of an hour and a half reviewing it. The next appointment was longer, more thorough, and produced the workup she had been asking for.

She is undergoing the biopsy now. She is nervous about it. She is also, for the first time in a decade, being heard.

She told me her story because she trusted me with it. She also gave me permission to share it with you. I am protecting her details. I am not protecting the pattern.

Published cases now show the same pattern. AI-generated analysis leading to earlier diagnosis. Years of conservative monitoring giving way to a full workup only after the patient arrived with algorithm-supported reasoning.

When a patient brings AI-informed evidence to an appointment and the physician dismisses it, the documentation trail now includes what was surfaced and when. The question a plaintiff’s attorney will ask is: you were presented with this information. What did you do with it?

Why This Creates Ethical Tension

The double bind is structural.

If a physician uses an AI tool and the tool errs or hallucinates, the physician is responsible for the error. The physician signed the note. The physician ordered the test or chose not to. The tool does not share liability.

If a physician does not use an AI tool and the outcome would have been different with it, the physician is responsible for the omission. The tool was available. The evidence supported its use. The physician chose not to engage it.

Both sides face the same person: the clinician.

No malpractice framework accounts for shared responsibility with an algorithm. No residency training prepares clinicians to hold this risk. Every decision, every note, every referral becomes part of the clinical record. The chart is our primary legal protection. And most of us are building it in real time, without formal guidance.

Equity Lens

Patients with digital literacy, portal access, and the confidence to challenge clinicians are already using AI to push their care forward. My friend is one of them. Most of my panel is not.

I think of one patient I see regularly. Monolingual Spanish-speaking. No portal account. She has never used a large language model. Her granddaughter, who lives in another county, has. The diagnostic acceleration available to one of them is not available to the other.

In safety-net settings, patients face language barriers, limited portal access, and fragmented records. AI-augmented self-advocacy is far less feasible for the populations most likely to experience diagnostic delays.

This creates a secondary inequity. The malpractice framework assumes every patient had access to the same tools. In practice, AI-informed patients accelerate their care while others stay on the same slow diagnostic timelines. The liability architecture does not account for the access gap.

How I Now Think About This

I hold the double bind without pretending it resolves.

When a patient presents AI-generated information, I treat it as a data point. Not a directive. Not a disruption. I ask: what data did the model see? What assumptions is it making? What is the downside of acting on this versus not acting on it?

I document the conversation. I document what was presented and how I responded. Not because I trust the algorithm. Because the documentation trail is where liability lives.

When I taught medical students this material, I told them to use LLMs that ground their answers in the medical literature and cite their reasoning, not the ones that generate fluent prose without a citation trail. I taught prompt engineering as a clinical skill. The legal framework is catching up to what the curriculum has already started naming.

I now treat every AI interaction in clinical care the way I treat any other diagnostic input: with structured skepticism, explicit reasoning in the chart, and awareness of what a reviewer would see two years later.

Digital Health Pearls

The liability question has two faces. Liability for AI-related errors is one. Liability for not using AI when it was available is the other. Both face the clinician.

When a patient brings AI output to a visit, treat it as clinical information. Document what was presented, what you assessed, and what you decided. The chart is the legal record.

Not all LLMs are the same. Tools that ground their reasoning in clinical guidelines and the medical literature behave differently from tools that generate confident prose without a citation trail. Knowing which one your patient consulted, and which one your colleague is recommending, is part of the new clinical literacy.

The standard of care does not shift by announcement. It shifts by accumulation. Published studies, institutional adoption, peer practice. It is shifting now.

TL;DR

Malpractice risk is forming around AI from both directions: using it and not using it. The research evidence is mixed and accumulating in multiple directions at once. No formal guidance exists. No training covers it. The clinician holds the liability in both scenarios. Document your reasoning. Treat AI-informed patient input as clinical data. The standard of care is moving, and the legal framework is following.

Compare Notes

The next time you sign an AI-generated note, notice the pause before your signature. The pause is the double bind.

Forward this to one colleague who uses an AI scribe. Ask them: has anyone at your institution named the liability exposure on either side? If the answer is no, you are both operating inside a framework nobody has explained to you yet.

The conversation at the dinner table will eventually reach the courtroom. The question is whether clinicians shape it first.

Disclaimers: All views expressed are my own and do not represent my employer or any institution I am affiliated with. Any tools, products, or technologies mentioned are included for educational purposes only and are not sponsored or endorsed. Nothing in this piece should be interpreted as medical advice.